2021 Model Status and Whitepaper

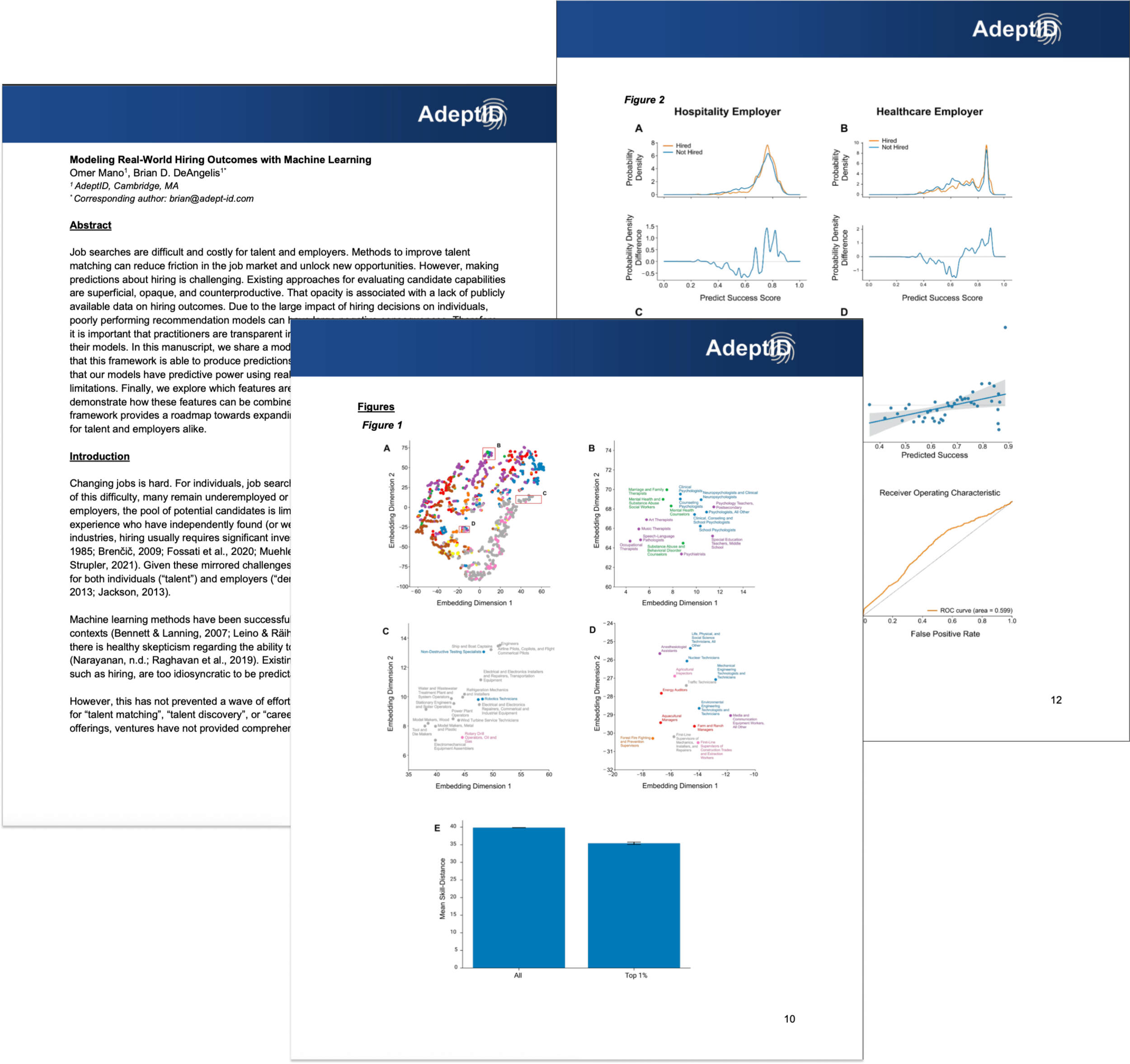

As the year draws to a close, we’re pleased to release a whitepaper presenting the status of our modeling efforts. This transparency around the actual performance of our algorithms is a critical part of our company values and, we believe, an important differentiator between our work and other attempts to characterize talent using AI/ML.

First, a bit of context (or: a reminder on what problem we’re trying to solve)…

As we try to understand the future of work, one thing is obvious: Algorithms will have a dramatic influence on how hiring decisions are made. As such, the question at hand isn’t “Will AI become integral to talent matching programs?” but rather “How can we best incorporate AI to solve for transparency as we match talent to opportunities?”

Job searches prove difficult and costly for both talent and employers. Existing approaches for evaluating candidates are problematic, superficial, and opaque.

Technology’s inability to help (and in fact its tendency to hurt) is, in great part, due to the poor quality of publicly available data on hiring outcomes.

Many individuals remain underemployed or employed in jobs that are unsatisfying because of the inefficiencies plaguing the hiring process. For employers, the pool of potential talent is limited to individuals with conspicuously relevant experience who have independently found (or were recruited for) open positions. Hiring usually requires a significant investment of time and resources.

Methods to improve talent matching with algorithms can reduce friction in the job market and unlock new opportunities, but making predictions about hiring is a challenge faced by everyone across the talent supply chain. Poorly performing recommendation models can have massive negative consequences – and there’s plenty of evidence to suggest that they already do.

It is critical that any developer of algorithms for talent be transparent in reporting the structure and performance of their models.

Several commercial ventures have invested heavily in creating machine learning-based solutions for “talent matching”, “talent discovery”, or “career pathing.” Yet these organizations have not provided comprehensive analyses of their modeling frameworks or the corresponding performance of their models, so the overall design and accuracy of their systems remain unknown to public audiences. That opacity is cause for concern.

We will do it differently.

Here’s where we’re at…

As of December 2021, we can say that AdeptID’s early models provide modest predictive power. Our methodology and framework suggest a clear path towards deeper insights and improved performance.

By combining predictive features, creating increasingly sophisticated synthetic training data, and aggregating hiring observations across employers, we expect future models and associated tooling to be transformative for individuals and employers alike, bringing transparency to the hiring process and creating a more diverse, representative workforce.

To learn more about our modeling and accuracy metrics, see the accompanying whitepaper, published by AdeptID’s Brian DeAngelis (Chief Data Scientist and Co-Founder) and Omer Mano (Research Data Scientist).